02 Oct 2018

New AI Research Scholarship for Department's Active Vision Lab

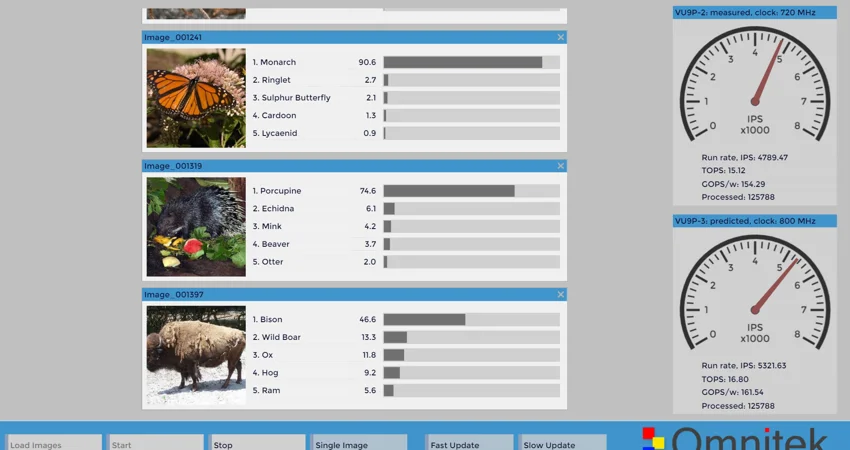

Omnitek, a leading designer of intelligent video and vision systems, today announced a new DPhil (PhD) research sponsorship to fund the study of novel techniques for accelerating deep learning on FPGAs (Field-Programmable Gate Arrays).

The Omnitek Oxford University Research Scholarship has been established to fund DPhil students to research novel compute architectures and algorithmic optimisations for machine learning.

Omnitek's media announcement explains, 'While in the short term the GPU has provided a convenient platform for early machine learning applications, multiple alternative compute engine architectures are now being developed specifically for deep learning. The rapid pace of development in neural network topologies and optimisation techniques for deep learning will quickly make even these technologies obsolete. Omnitek has recognised that in order to maintain its leadership position in machine learning with its FPGA-based Deep-Learning Processing Unit (DPU) it must invest in academic research at the highest level'.

The student, Marcelo Nascimento, who holds a first-class degree from Oxford, will join the Department's Active Vision Laboratory under the supervision of Professor Victor Prisacariu, Associate Professor in Information Engineering.

Professor Prisacariu commented, “We are delighted to work with an industry leader in this field and very excited by the fact that Omnitek will be able rapidly to deliver the benefits of our research to its commercial customers through the reprogrammable FPGA platform”.

The work will be performed in collaboration with Omnitek’s own industry-based research to help shape the future of AI compute engines and the algorithms that run on them.

Roger Fawcett, CEO at Omnitek, commented, “Oxford University with the Active Vision Laboratory was a natural choice for us since they are leading establishments in AI and we have employed many top graduates and postgraduates from Oxford University at Omnitek”.